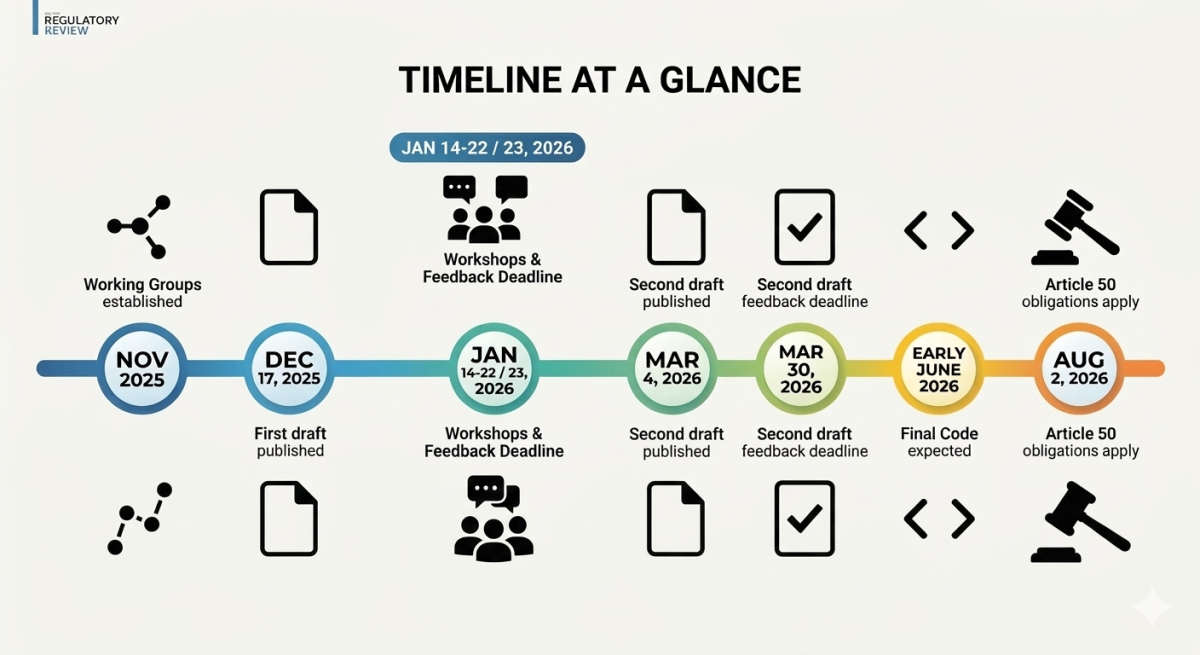

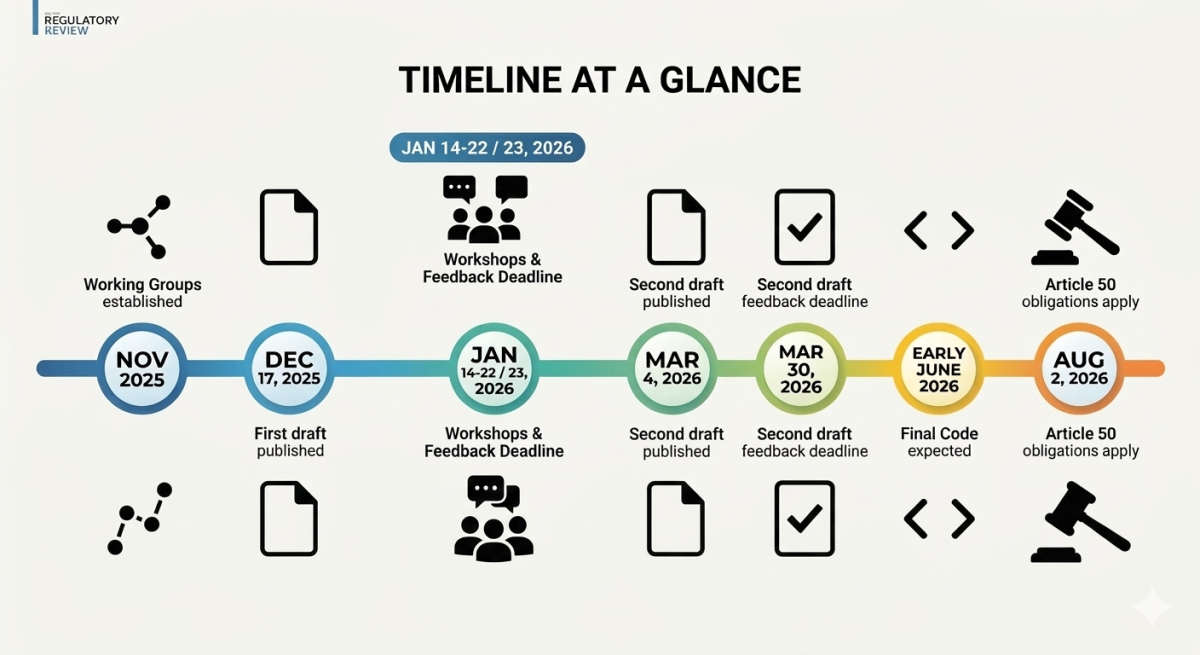

The European Commission published the second draft of the Code of Practice on marking and labelling of AI-generated content. This version, drafted by independent experts, integrates written feedback from hundreds of participants and observers — including industry, academia, civil society, and other stakeholders — gathered through an EU survey, stakeholder meetings, and workshops held in January 2026. The draft also incorporates contributions from Member States submitted via the AI Board and from Members of the European Parliament represented in the IMCO-LIBE working group monitoring AI Act implementation.

A public consultation on the second draft is open until March 30, 2026. The code is expected to be finalised by the beginning of June 2026. The rules covering the transparency of AI-generated content will become applicable on August 2, 2026.

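Article 50 of the EU AI Act requires providers to inform people when they are interacting with an AI system, unless this is obvious from the context or the system is authorised by law to detect, prevent, investigate or prosecute criminal offences. Providers of AI systems that generate synthetic audio, image, video or text content, including deepfakes, must also mark those outputs in a machine-readable format so that they are detectable as artificially generated or manipulated.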

The AI Act establishes a horizontal set of transparency obligations aimed at mitigating risks of deception, manipulation, and misinformation arising from generative AI. Specifically, Article 50 requires that outputs of generative AI systems be identifiable as AI-generated or manipulated, and that users be informed where content constitutes a deepfake or where AI-generated text is published to inform the public on matters of public interest.

The transparency obligations under Article 50 will become fully applicable 24 months after the AI Act entered into force on August 1, 2024, i.e. on August 2, 2026. While recent discussions suggest a limited six-month grace period for systems already on the market by that date, this buffer will likely not apply to new systems released after the deadline.

The transparency obligations in Article 50 of the AI Act will complement other rules like those for high-risk AI systems or general-purpose AI models. The Commission is also preparing, in parallel, guidelines to clarify the scope of the legal obligations and to address aspects not covered by the Code.

The first draft emerged from two Working Groups established in November 2025. The drafting process incorporated 187 written submissions from a public consultation, three workshops, and a review of expert studies.

On January 14, 2026, the Working Group on Marking and Detection Techniques (WG1) examined the code's first section. Discussions focused on state-of-the-art marking techniques (including watermarking and metadata-based solutions), detection capabilities and their technical limitations, interoperability and standardisation challenges, and robustness against removal. On January 22, a workshop of WG2, the group responsible for the labelling section, examined the proposed taxonomy distinguishing "AI-generated" from "AI-assisted" content, proportionality in labelling requirements, and the responsibilities of online platforms and other intermediaries. A joint WG1 and WG2 workshop held the same day explored the interplay between marking and labelling obligations.

Graphic made with Nano Banana

The draft code explicitly rejects the idea that a single technical solution could satisfy Article 50 in all cases. Instead, it promotes a multilayered approach combining visible disclosures with invisible or machine-readable techniques — such as metadata or watermarking — in order to improve resilience against removal or manipulation.

Key commitments include a revised two-layered marking approach involving secured metadata and watermarking, optional fingerprinting and logging, and protocols for detection and verification.
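To make "secured metadata" more concrete, here is a minimal Python sketch of a provenance manifest that is bound to the content by a SHA-256 hash and protected by a signature. It is an illustration under simplifying assumptions, not how any standard works in detail: real C2PA manifests use certificate-based digital signatures and a much richer structure, whereas this sketch uses a shared HMAC key. The names `make_manifest`, `check_manifest` and `PROVIDER_KEY` are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret. Real C2PA manifests are signed with
# certificate-based signatures, not an HMAC key.
PROVIDER_KEY = b"demo-provider-key"


def _sign(claim: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the claim."""
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    return hmac.new(PROVIDER_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(content: bytes, generator: str) -> dict:
    """Build a minimal provenance manifest bound to the content's SHA-256 hash."""
    claim = {
        "generator": generator,
        "ai_generated": True,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    return {"claim": claim, "signature": _sign(claim)}


def check_manifest(content: bytes, manifest) -> str:
    """Return 'missing', 'invalid', 'mismatch' or 'valid'."""
    if manifest is None:
        return "missing"  # e.g. metadata stripped by a screenshot or re-upload
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return "invalid"  # the claim was altered after signing
    if manifest["claim"]["content_sha256"] != hashlib.sha256(content).hexdigest():
        return "mismatch"  # the content no longer matches the signed hash
    return "valid"


image = b"...generated image bytes..."
manifest = make_manifest(image, generator="example-model")
print(check_manifest(image, manifest))            # valid
print(check_manifest(image + b"\x00", manifest))  # mismatch
print(check_manifest(image, None))                # missing
```

In this sketch the signature protects the claim against tampering, but the `missing` and `mismatch` cases show the weakness of metadata on its own: a stripped manifest leaves nothing to check, and any re-encoding of the bytes breaks the hash binding.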

Metadata embedding (e.g., using the C2PA standard) is the baseline approach, but it is fragile: metadata is easily stripped when a user takes a screenshot or uploads content to platforms that discard it. Imperceptible watermarking "interwoven" with the content therefore serves as a hardening layer and must be robust enough to withstand common transformations such as compression or cropping. Fingerprinting or logging serves as a fallback where active marking fails.
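The idea of a watermark carried in the content itself can be illustrated with a toy spread-spectrum scheme: a low-amplitude pseudorandom ±1 pattern derived from a secret key is added to the pixel values, and detection correlates the content with the same pattern. This is a teaching sketch, not the technique any provider actually uses; the constants `STRENGTH` and `THRESHOLD`, the `degrade` function standing in for lossy compression, and the random 256×256 test image are all assumptions.

```python
import random

# Illustrative parameters (assumptions, not values from the draft code).
STRENGTH = 4.0   # amplitude of the hidden pattern, in grey levels
THRESHOLD = 2.0  # detection threshold on the correlation score


def key_pattern(key: int, n: int) -> list[int]:
    """Pseudorandom +1/-1 pattern that only the key holder can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels: list[float], key: int) -> list[float]:
    """Add the keyed pattern, scaled by STRENGTH, to every pixel value."""
    pattern = key_pattern(key, len(pixels))
    return [p + STRENGTH * s for p, s in zip(pixels, pattern)]


def score(pixels: list[float], key: int) -> float:
    """Correlation of the mean-centred pixels with the keyed pattern.

    Close to STRENGTH for marked content, close to 0 otherwise.
    """
    pattern = key_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)


def degrade(pixels: list[float], seed: int = 7) -> list[float]:
    """Crude stand-in for lossy re-encoding: add noise, round, clip to 0..255."""
    rng = random.Random(seed)
    return [min(255.0, max(0.0, float(round(p + rng.gauss(0, 5))))) for p in pixels]


rng = random.Random(1)
original = [rng.uniform(0, 255) for _ in range(256 * 256)]
marked = embed(original, key=42)

# No metadata is involved: the mark lives in the pixel values themselves.
print(score(degrade(marked), key=42) > THRESHOLD)    # True
print(score(degrade(original), key=42) > THRESHOLD)  # False
print(score(degrade(marked), key=99) > THRESHOLD)    # False (wrong key)
```

Because the mark is spread across every pixel, it survives the loss of metadata and the crude re-encoding simulated here. This naive scheme would not survive cropping or rescaling, which shift the pattern out of alignment; production watermarks need considerably more engineering to meet the robustness expectations described above.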

Providers are also expected to make available a free-of-charge interface—such as an API or user interface—or a publicly available detector to enable users and third parties to verify, with confidence scores, whether content has been generated or manipulated by their AI system or model.
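The fingerprinting/logging fallback and a confidence-scored verification interface can be combined into a simple lookup, sketched here in Python. The assumptions are significant: the provider is assumed to log a 64-bit average hash ("aHash", one simple perceptual hash) of every output, images are assumed to be already downscaled to 8×8 grayscale, and the cascade order, confidence values and distance threshold are illustrative choices, not anything specified in the draft. `OutputLog` and `Verdict` are hypothetical names.

```python
from dataclasses import dataclass


def average_hash(gray8x8: list[list[float]]) -> int:
    """64-bit average hash: one bit per pixel, set if the pixel is above the mean."""
    flat = [v for row in gray8x8 for v in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


@dataclass
class Verdict:
    ai_generated: bool
    confidence: float  # 0.0 to 1.0; the values used below are illustrative
    layer: str         # which layer produced the verdict


class OutputLog:
    """Hypothetical provider-side log of fingerprints of every generated output."""

    def __init__(self, max_distance: int = 10):
        self.hashes: list[int] = []
        self.max_distance = max_distance

    def record(self, gray8x8: list[list[float]]) -> None:
        self.hashes.append(average_hash(gray8x8))

    def verify(self, gray8x8: list[list[float]], manifest_valid: bool = False,
               watermark_found: bool = False) -> Verdict:
        # Cascade: secured metadata first, then watermark, then fingerprint fallback.
        if manifest_valid:
            return Verdict(True, 0.99, "metadata")
        if watermark_found:
            return Verdict(True, 0.9, "watermark")
        query = average_hash(gray8x8)
        best = min((hamming(query, h) for h in self.hashes), default=64)
        if best <= self.max_distance:
            return Verdict(True, round(0.8 * (1 - best / 64), 2), "fingerprint")
        # No signal found: this does not prove the content is human-made.
        return Verdict(False, 0.0, "none")


original = [[r * 8 + c for c in range(8)] for r in range(8)]
log = OutputLog()
log.record(original)

brightened = [[v * 1.1 + 5 for v in row] for row in original]
inverted = [[63 - v for v in row] for row in original]
print(log.verify(brightened))  # Verdict(ai_generated=True, confidence=0.8, layer='fingerprint')
print(log.verify(inverted))    # Verdict(ai_generated=False, confidence=0.0, layer='none')
```

The brightened copy still matches because an average hash only records which pixels sit above the mean, a property that uniform brightness changes preserve. A negative verdict only means that no layer found a signal.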

Compared to the first draft, the second version removes and consolidates several measures and introduces optional elements while ensuring that all measures remain technically feasible and proportionate.

Labelling Deepfakes and Public-Interest Text

Section 2, aimed at deployers of AI systems, covers the labelling of deepfakes and of AI-generated text published on matters of public interest. It has been restructured to simplify and streamline the commitments, and the taxonomy distinguishing AI-generated from AI-assisted content has been removed entirely. The section now sets design and placement requirements for icons, labels, and disclaimers, ensuring a minimum level of uniformity while allowing signatories to adapt solutions to their needs.

For live video, a continuous visual indicator together with an opening disclaimer is required. For recorded video, a visible indicator or disclaimer suffices. For AI-generated text on matters of public interest, disclosure is mandatory unless a human takes full editorial responsibility, in which case the deployer must document the identity of the person holding that responsibility.

Proportionate treatment is permitted for artistic, fictional, or satirical works, but AI involvement must still be disclosed, in line with the AI Act's requirement that such disclosure not hamper the display or enjoyment of the work. The code also defines specific regimes for content under human review or editorial control.
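The deployer-side labelling rules lend themselves to a small decision function. The Python sketch below encodes a simplified reading of the two paragraphs above: live video, recorded video, public-interest text with or without a responsible editor, and the proportionate regime for artistic or satirical works. The label strings, the `ContentKind` enum and `required_disclosure` are hypothetical, and the draft's actual design and placement requirements are more detailed than this.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class ContentKind(Enum):
    LIVE_VIDEO = "live video deepfake"
    RECORDED_VIDEO = "recorded video deepfake"
    PUBLIC_INTEREST_TEXT = "AI-generated text on a matter of public interest"


@dataclass
class Disclosure:
    labels: list[str] = field(default_factory=list)  # what the audience must see
    record: Optional[str] = None  # what the deployer must document internally


def required_disclosure(kind: ContentKind, *, responsible_editor: Optional[str] = None,
                        artistic_or_satirical: bool = False) -> Disclosure:
    """Simplified reading of the draft's labelling rules (illustrative only)."""
    if kind is ContentKind.PUBLIC_INTEREST_TEXT:
        if responsible_editor:
            # A human holds editorial responsibility: no label, but the
            # deployer documents who that person is.
            return Disclosure(record=f"editorial responsibility: {responsible_editor}")
        return Disclosure(labels=["disclosure that the text is AI-generated"])
    if artistic_or_satirical:
        # Assumption: proportionate treatment means a lighter, non-intrusive
        # disclosure for video deepfakes, never no disclosure at all.
        return Disclosure(labels=["non-intrusive disclosure of AI involvement"])
    if kind is ContentKind.LIVE_VIDEO:
        return Disclosure(labels=["continuous visual indicator", "opening disclaimer"])
    return Disclosure(labels=["visible indicator or disclaimer"])


print(required_disclosure(ContentKind.LIVE_VIDEO).labels)
# ['continuous visual indicator', 'opening disclaimer']
print(required_disclosure(ContentKind.PUBLIC_INTEREST_TEXT, responsible_editor="A. Editor"))
# Disclosure(labels=[], record='editorial responsibility: A. Editor')
```

Separating what the audience sees (`labels`) from what the deployer keeps on file (`record`) mirrors the editorial-responsibility exception, where the obligation shifts from labelling to documentation.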

The annex of the second draft now includes illustrative examples of a potential EU icon that would be made freely available to signatories. These examples will be discussed with stakeholders in the next round of workshops. The section also proposes a task force to develop a future uniform, interactive EU icon, which signatories may support on a voluntary basis.

The code promotes the use of open standards for AI content marking and the EU icon for labelling to simplify compliance and reduce costs. Interim solutions, such as the label "AI", are permitted while the uniform icon is being finalised.

The Deepfakes Problem

The Code of Practice applies only to lawful deepfakes, meaning content that does not in itself violate the law; it does not replace criminal law enforcement. In early 2026, French authorities launched an investigation into the dissemination of non-consensual sexually explicit deepfakes generated with Grok, X's artificial intelligence system. French government ministers reported the images, which digitally "undressed" women and teenagers without their consent, as manifestly illegal. The case is not primarily about a failure to label AI-generated content; it highlights a failure to moderate and remove illegal content. In such situations the Code of Practice is of limited relevance, and effective enforcement of the Digital Services Act (DSA) and national criminal law becomes decisive.

The Information Technology Industry Council (ITI), representing major global technology companies, has called for the code to avoid prescribing specific marking and detection techniques, arguing that no single solution is suitable for all types of generative AI systems.

Stakeholders also disagree on openness: providers fear reverse-engineering of their marking systems, while civil society groups campaign for auditable formats. The Commission frames the voluntary guidelines as an interim step until formal European standards emerge.

A key dynamic to watch will be the list of signatories. Just as with the General-Purpose AI Code of Practice, major global AI providers are expected to sign first, particularly regarding provider obligations under Article 50. Their participation will effectively set the technical standard: once major providers agree on a specific watermarking or metadata standard, such as C2PA, it will likely become the de facto market requirement, making it difficult for smaller players to deviate.

Although voluntary, the Code of Practice on Transparency of AI-Generated Content is likely to become a key reference point for regulators and courts when assessing compliance with the AI Act's transparency obligations.

The draft code makes clear that the Article 50 transparency requirements should be implemented in good time, even though they will not be binding until August 2026. Providers and deployers should therefore take the code into account now when designing their compliance measures, because retrofitting them later is possible only to a limited extent, both technically and organisationally.

A key risk flagged by legal experts is the proposed Digital Omnibus on AI, which could alter or delay certain aspects of the AI Act framework and potentially affect this timeline.

This article has been written with the use of AI and fact-checked, curated and edited by the CU's editorial team.